Creating an effective and portable VNF testing framework with end-to-end automation

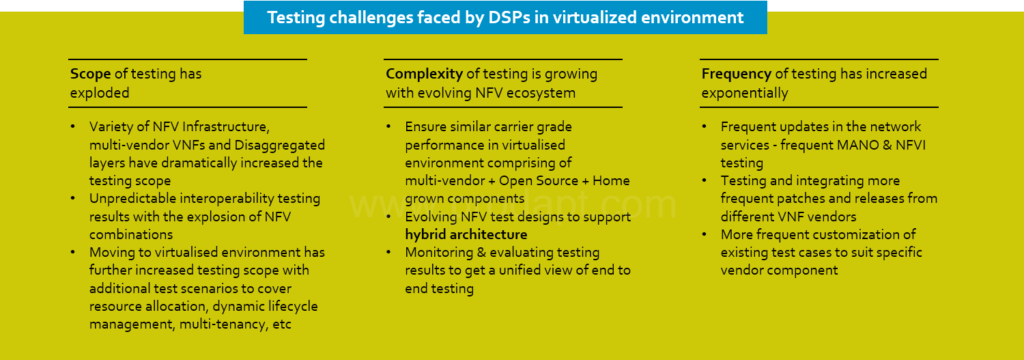

More service providers in the connectedness industry are rapidly bringing new services online to improve customer satisfaction and service quality. However, they face testing challenges in scope, complexity, and frequency during the delivery process. Almost 40% of a service provider’s time and effort is consumed by testing activities. Virtual Network Function (VNF) testing in the connectedness industry has become complex, costly, and time-consuming. To address these challenges in virtualized environments, existing testing methods and processes must be revised and shifted to a new testing model.

This Insight outlines the key elements that help service providers create an effective and portable VNF testing framework with end-to-end automation. The key benefits include rapid test development and maintenance, faster product launch, improved product quality, and differentiated product delivery.

Accelerate service rollout by 78% with a redesigned VNF testing framework.